Edge AI chips: Edge computing is transforming the design of IoT devices

As artificial intelligence becomes widespread, AI workloads are gradually moving from the cloud to devices at the network edge. As the number of Internet of Things (IoT) devices keeps growing, cloud processing alone can no longer meet the requirements for real-time response, low latency, and data privacy. Edge AI chips are dedicated processors that perform AI inference locally on terminal devices. Their core features are low power consumption, low latency, and strong real-time performance, which let devices make intelligent decisions without relying on the cloud. From smart cameras to industrial automation, Edge AI chips are becoming a key component in IoT device design and are driving the development of the next generation of intelligent hardware.

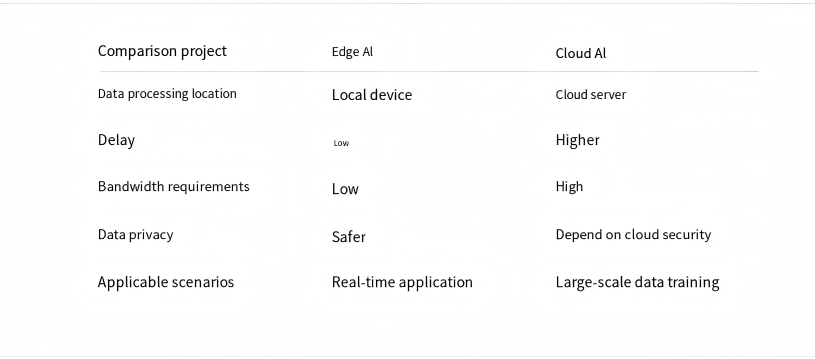

Edge AI chips and cloud AI chips

What are Edge AI chips?

Edge AI chips are processors that run artificial intelligence algorithms directly on devices at the network edge, without relying on remote cloud servers for data processing. They run trained AI models to complete local inference tasks such as image recognition, voice interaction, anomaly detection, and autonomous driving perception.

Common Edge AI devices:

- Smart security cameras

- Industrial monitoring equipment

- Automated robots

- Wearable health devices
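To make local inference concrete, here is a minimal sketch of on-device anomaly detection. It assumes a simple rolling z-score check as a stand-in for a trained model; the class name `LocalAnomalyDetector`, the window size, the threshold, and the sample readings are all hypothetical and chosen only for illustration.

```python
from collections import deque
from statistics import mean, pstdev


class LocalAnomalyDetector:
    """Flags readings that deviate strongly from a window of recent readings."""

    def __init__(self, window=20, threshold=3.0, min_history=5):
        self.readings = deque(maxlen=window)  # recent normal readings only
        self.threshold = threshold            # z-score limit (assumed value)
        self.min_history = min_history        # readings needed before judging

    def update(self, value):
        """Return True if `value` is anomalous relative to recent history."""
        is_anomaly = False
        if len(self.readings) >= self.min_history:
            mu = mean(self.readings)
            sigma = pstdev(self.readings)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Keep anomalies out of the baseline so they do not distort it.
            self.readings.append(value)
        return is_anomaly


detector = LocalAnomalyDetector()
samples = [20.0, 20.1, 19.9, 20.2, 20.0, 19.8, 20.1, 35.0, 20.0]
flags = [detector.update(s) for s in samples]
print(flags)
```

Every decision here is made from data held on the device; nothing is sent to a server. A real Edge AI chip would run a trained neural network instead of a statistical rule, but the control flow is similar.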

What are cloud AI chips?

Cloud AI chips are high-performance AI chips deployed in data centers for cloud computing. Their main tasks are AI model training and large-scale AI inference. Training feeds massive amounts of data to a model and requires a great deal of computing power, so hundreds or even thousands of chips usually run together. The trained model is then hosted in the cloud; when a customer sends a request, the cloud computes the result and returns it.

The differences between Edge AI chips and cloud AI chips:

- Edge AI: Focuses on low power consumption, low latency, local processing of data, deployment at the terminal, protection of privacy, and saving bandwidth.

- Cloud: Places emphasis on high computing power, high throughput, high concurrency, and model training, but has high power consumption and is deployed in data centers.

Edge AI in IoT Devices

The Role of Edge AI in IoT Devices

Edge AI can address the problems that the growing number of IoT devices exposes in the traditional cloud computing model.

1. Reduce latency

In industrial automation systems, a device malfunction needs to be detected and handled within milliseconds. In the traditional cloud computing model, all data is sent to the cloud and the results are sent back, and this round trip adds latency. Edge AI chips perform the computation directly on the device, so a decision can be made and acted on without waiting for the network.
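The latency argument can be sketched as a simple budget calculation. All figures below (the 10 ms deadline, the network and inference times) are assumed values for illustration, not measurements, and the function names are hypothetical.

```python
def cloud_latency_ms(uplink_ms, inference_ms, downlink_ms):
    """Total response time when the data makes a round trip to the cloud."""
    return uplink_ms + inference_ms + downlink_ms


def meets_deadline(latency_ms, deadline_ms):
    """True if the response arrives within the control deadline."""
    return latency_ms <= deadline_ms


# Assumed, illustrative numbers.
DEADLINE_MS = 10.0       # how quickly the fault must be handled
EDGE_INFERENCE_MS = 8.0  # slower chip, but no network hop

cloud_ms = cloud_latency_ms(uplink_ms=25.0, inference_ms=5.0, downlink_ms=25.0)

print(f"cloud: {cloud_ms} ms, ok={meets_deadline(cloud_ms, DEADLINE_MS)}")
print(f"edge:  {EDGE_INFERENCE_MS} ms, ok={meets_deadline(EDGE_INFERENCE_MS, DEADLINE_MS)}")
```

Under these assumptions the cloud path misses the deadline even though its inference step is faster, because the network hops dominate the total.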

2. Reduce network bandwidth pressure

IoT devices generate massive amounts of data every day. Transmitting the data to the cloud for processing would consume a large amount of network bandwidth. Edge AI can perform data processing and analysis locally, significantly reducing the demand for bandwidth.
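A rough calculation shows the scale of the saving. The sketch below compares a camera that streams continuously to the cloud with one that analyzes video locally and uploads only small event messages. The 2 Mbit/s bitrate, 500 events per day, and 200 bytes per event are assumptions picked for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def daily_stream_bytes(bitrate_bps):
    """Bytes uploaded per day by a continuous stream at `bitrate_bps` bits/s."""
    return bitrate_bps * SECONDS_PER_DAY // 8


def daily_event_bytes(events_per_day, bytes_per_event):
    """Bytes uploaded per day when only detection events are sent."""
    return events_per_day * bytes_per_event


stream_bytes = daily_stream_bytes(2_000_000)  # assumed 2 Mbit/s video stream
event_bytes = daily_event_bytes(500, 200)     # assumed 500 events of 200 bytes
ratio = stream_bytes / event_bytes

print(f"streaming:   {stream_bytes / 1e9:.1f} GB per day")
print(f"events only: {event_bytes / 1e3:.1f} kB per day")
print(f"reduction:   {ratio:,.0f}x")
```

The exact ratio depends entirely on the assumed numbers, but the gap between raw sensor data and inference results is typically several orders of magnitude.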

3. Enhance data security and privacy

Many IoT devices handle sensitive personal data. Edge AI processes that data locally, which reduces how much of it has to be transmitted and therefore how much is exposed in transit, improving system security.

4. Improve system reliability

Edge AI can complete critical computing tasks locally, ensuring that the device can operate normally even in unstable network conditions.
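One way to picture this is a device that always decides locally and treats the cloud as optional. In the sketch below, the `EdgeNode` class, its threshold rule, and the `send` callback are hypothetical; the threshold check stands in for an on-device model.

```python
from collections import deque


class EdgeNode:
    """Decides locally and uploads reports only when the network is available."""

    def __init__(self, threshold, max_pending=100):
        self.threshold = threshold
        # Bounded queue: the oldest reports are dropped if an outage lasts too long.
        self.pending = deque(maxlen=max_pending)

    def handle(self, reading, network_up, send):
        # The critical decision never depends on the network.
        action = "shutdown" if reading > self.threshold else "ok"
        self.pending.append((reading, action))
        if network_up:
            # Flush everything buffered during the outage, oldest first.
            while self.pending:
                send(self.pending.popleft())
        return action


sent = []  # stands in for the cloud endpoint
node = EdgeNode(threshold=80.0)
a1 = node.handle(70.0, network_up=False, send=sent.append)
a2 = node.handle(95.0, network_up=False, send=sent.append)
a3 = node.handle(60.0, network_up=True, send=sent.append)
print(a1, a2, a3, sent)
```

The shutdown decision for the second reading is taken while the network is down; the cloud only learns about it once connectivity returns.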

Common Edge AI Chips in IoT Devices

Many low-power, highly integrated, and AI-accelerated Edge AI chips have been put into use in IoT devices:

- ESP32-S3: Widely used in smart home and IoT devices, supporting AI voice recognition and simple visual processing.

- Kendryte K210: Built-in neural network processor (KPU), suitable for image recognition and machine learning applications.

- STM32H7: High-performance MCU platform, capable of running embedded AI algorithms.

The Future Development of Edge AI

As artificial intelligence and semiconductor technologies continue to mature, Edge AI chips are evolving toward lower power consumption, higher computing power, smaller size, and higher integration. In the future, Edge AI will become one of the core technologies in the design of intelligent devices and IoT systems.

Common FAQs

1. Why is Edge AI important for IoT devices?

- Real-time data processing

- Reducing network bandwidth consumption

- Improving system reliability

- Enhancing data security and privacy protection

2. What are the key features of Edge AI chips?

- Integrated AI accelerator (NPU)

- Supports the running of machine learning models

- Highly efficient data processing capability

- Suitable for embedded systems